Science as distributed open-source knowledge development

Digital Total at the University of Hamburg

October 10, 2023

About Me

🔬 Position: I am a Postdoctoral Research Scientist in the Research Group “Mechanisms of Learning & Change” at the Institute of Psychology at the University of Hamburg

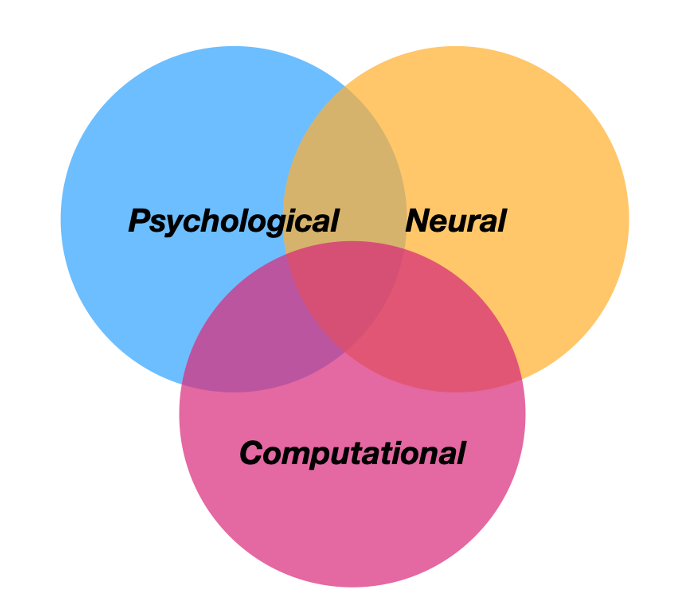

🎓 Education: BSc Psychology & MSc Cognitive Neuroscience (Technische Universität Dresden), PhD Cognitive Neuroscience (Freie Universität Berlin)

🔗 Contact: You can connect with me via email, Twitter, Mastodon, GitHub or LinkedIn

ℹ️ Info: Find out more about my work on my website, Google Scholar and ORCiD

This presentation

💻 Slides: https://lennartwittkuhn.com/digital-total

Source: https://github.com/lnnrtwttkhn/digital-total

📦 Software: Open & reproducible slides built with Quarto and deployed to GitHub Pages using GitHub Actions

License: Creative Commons Attribution 4.0 International (CC BY 4.0)

Contact: I welcome any feedback or suggestions, in person at this event, via email or via GitHub Issues. Thanks!

Agenda

- Digital Research & Digital Teaching

- Science as distributed open-source knowledge development

Research on “Mechanisms of Learning and Change”

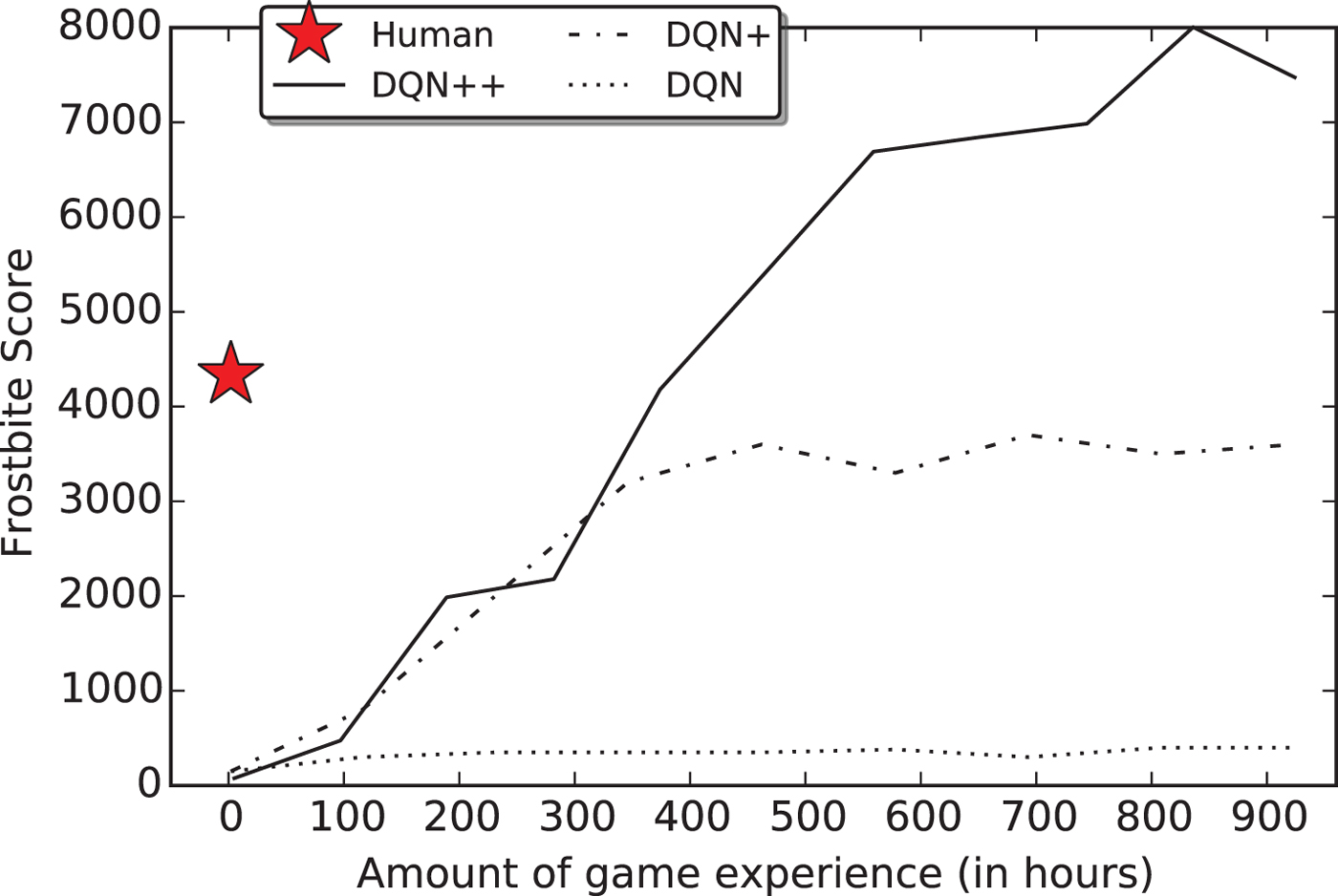

How does the brain use past experience to guide future decisions?

Digital Research

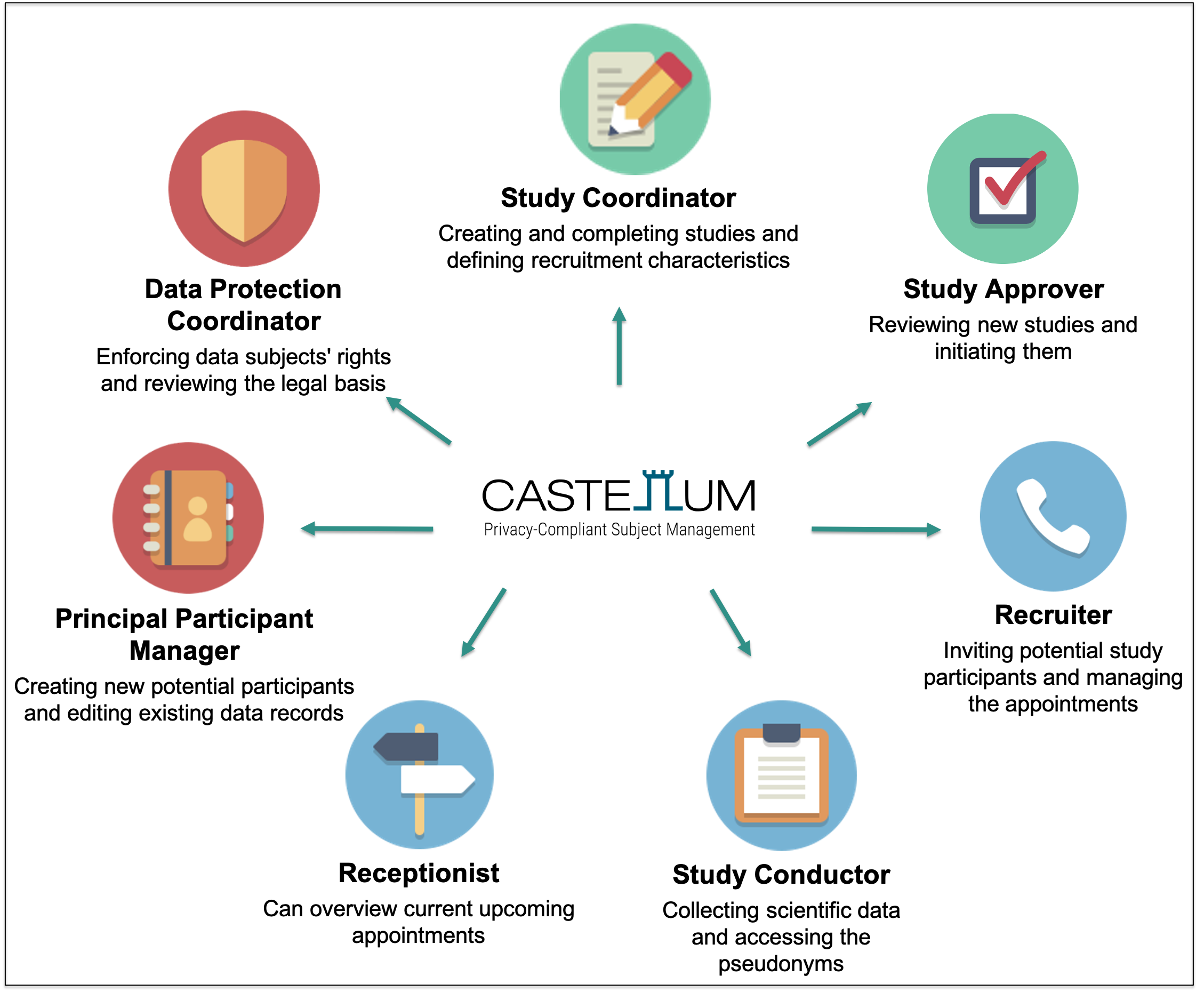

Castellum: Digital, privacy-compliant participant management system

Faculty of Psychology & Human Movement Science

“We optimize the digital recruitment of study participants. We have access to a broad participant database through which participants can be recruited according to specific criteria.” (see Digital Strategy, 2022)

- free to use, digital, open-source

- GDPR-compliant data protection & security

- developed at the Max Planck Society

- growing international user community

Castellum UHH Task Force

- 10 active members (scientific & IT staff)

- Ongoing consultations with the data protection officer

- Project website (in German) with open-source code

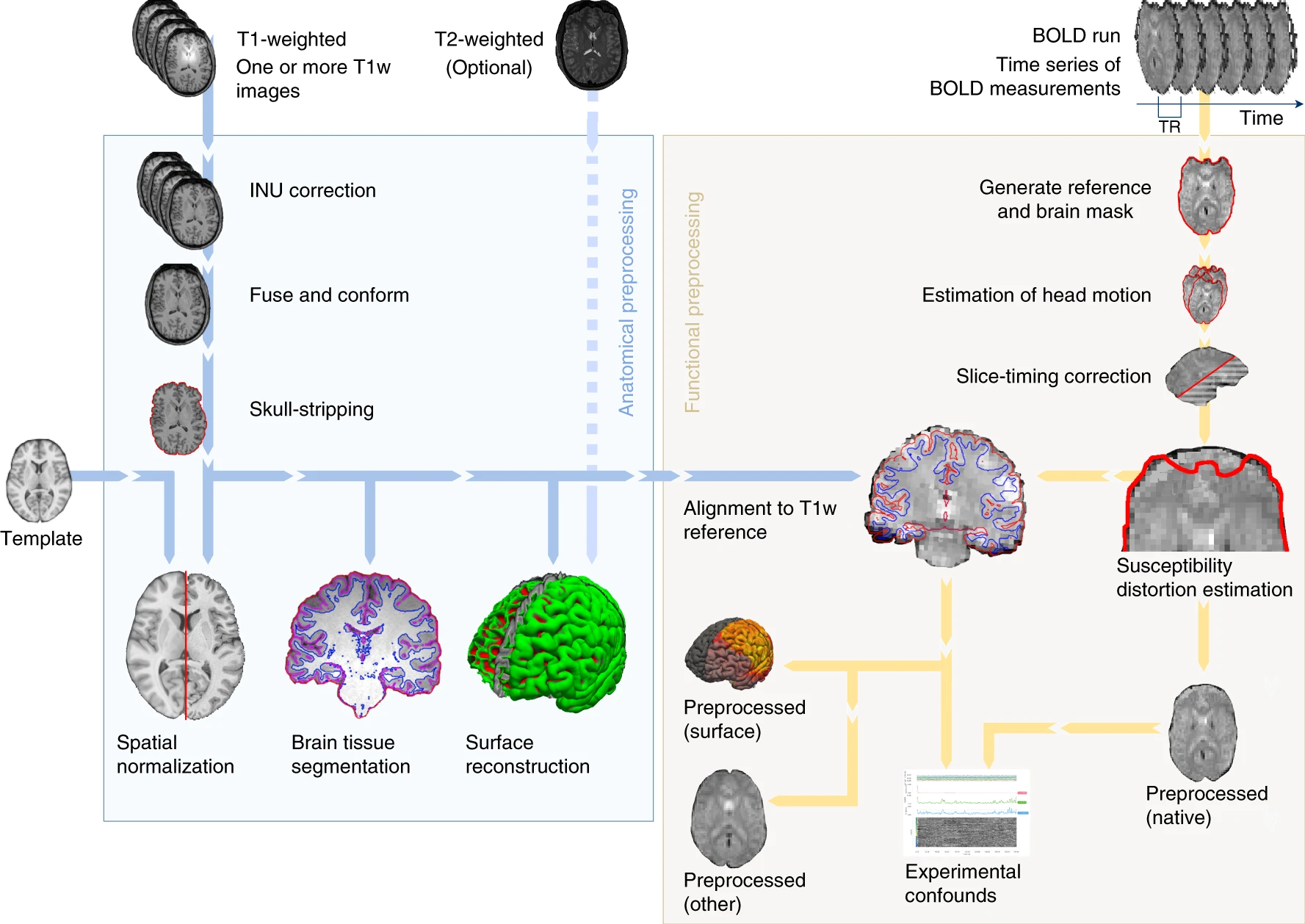

MRI Total: Transparent and reproducible MRI data processing

1. Neuroimaging Data Collection

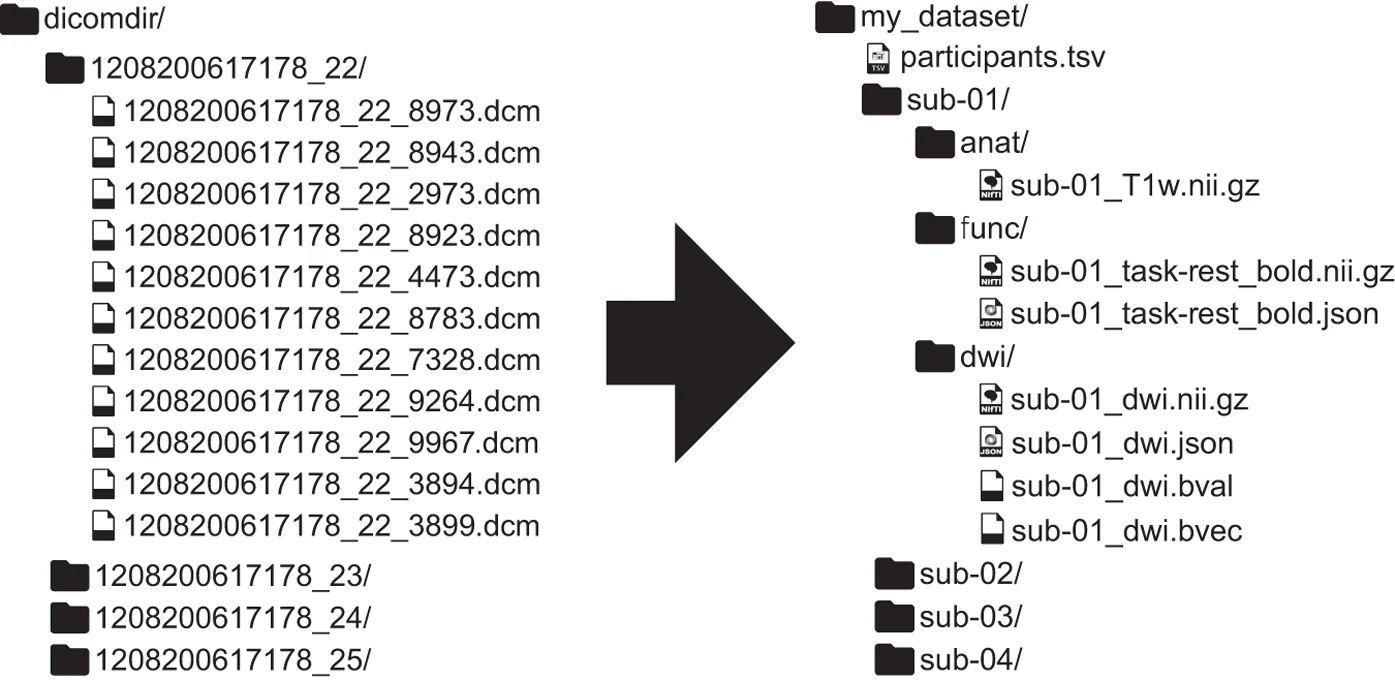

2. Standardization of human neuroimaging data

3. Automated MRI data quality control & processing

4. Open-source software & UHH infrastructure

- High-performance computing (using Hummel)

- Distributed data management (using DataLad)

- Data storage on UHH’s Object Storage and RDR

- Containerized computational environments
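As an illustration of what an automated quality-control step can look like, the minimal sketch below computes the temporal signal-to-noise ratio (tSNR: mean signal over time divided by its standard deviation over time) and flags time series that fall below a cut-off. Several things here are assumptions rather than part of the MRI Total pipeline: tSNR as the metric, the threshold of 20, and plain Python lists standing in for voxel time series. In practice this would run on real image data inside the containerized environment.

```python
from statistics import mean, stdev
from typing import Dict, List


def temporal_snr(timeseries: List[float]) -> float:
    """Temporal SNR: mean signal over time divided by its standard deviation over time."""
    sd = stdev(timeseries)
    return float("inf") if sd == 0 else mean(timeseries) / sd


def flag_low_tsnr(series: Dict[str, List[float]], threshold: float = 20.0) -> List[str]:
    """Return the sorted IDs of time series whose tSNR falls below `threshold`.

    The default threshold of 20 is a hypothetical placeholder, not a recommended value.
    """
    return sorted(name for name, ts in series.items() if temporal_snr(ts) < threshold)


# Toy signal intensities standing in for voxel time series from one functional run.
toy_series = {
    "stable": [100, 101, 99, 100, 100],
    "noisy": [100, 130, 70, 110, 90],
}
print(flag_low_tsnr(toy_series))  # prints ['noisy']
```

Because the check is a plain function of the data, it can be rerun automatically on every new dataset, and its results are reproducible.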

Digital Teaching

Teaching: Reproducible & FAIR open educational resources (OERs)

- Findable / Accessible: Ensure long-term preservation and get a persistent identifier (e.g., DOI) via data repositories, journal articles or OER registries

- Interoperable: Use plain-text formats (e.g., Markdown) or commonly used formats (e.g., PowerPoint)

- Reusable: Add documentation, metadata and share under an open license (e.g., Creative Commons licenses)
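The three criteria above can be turned into a simple automated check on a metadata record. The sketch below is hypothetical: the `OERMetadata` fields, the small allow-list of SPDX license identifiers and the accepted file extensions are illustrative assumptions, not a standard schema. The DOI pattern is adapted from the one Crossref suggests for modern DOIs.

```python
import json
import re
from dataclasses import asdict, dataclass, field
from typing import List, Optional

# DOI pattern adapted from Crossref's suggestion for modern DOIs (case-insensitive).
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)
# Illustrative allow-list of SPDX identifiers for open licenses (not exhaustive).
OPEN_LICENSES = {"CC-BY-4.0", "CC-BY-SA-4.0", "CC0-1.0"}
# Plain-text (Markdown, Quarto) or commonly used (PowerPoint) formats, as in the list above.
INTEROPERABLE_EXTENSIONS = (".md", ".qmd", ".txt", ".pptx")


@dataclass
class OERMetadata:
    """Hypothetical minimal metadata record for an open educational resource."""
    title: str
    authors: List[str]
    license: str  # SPDX identifier, e.g. "CC-BY-4.0"
    files: List[str] = field(default_factory=list)
    doi: Optional[str] = None


def fair_gaps(meta: OERMetadata) -> List[str]:
    """List FAIR-related gaps in a metadata record (an empty list means none were found)."""
    gaps = []
    if meta.doi is None or not DOI_PATTERN.match(meta.doi):
        gaps.append("findable/accessible: missing or malformed DOI")
    if not any(f.endswith(INTEROPERABLE_EXTENSIONS) for f in meta.files):
        gaps.append("interoperable: no file in a plain-text or commonly used format")
    if meta.license not in OPEN_LICENSES:
        gaps.append("reusable: license is not on the open-license allow-list")
    return gaps


slides = OERMetadata(
    title="Science as distributed open-source knowledge development",
    authors=["Lennart Wittkuhn"],
    license="CC-BY-4.0",
    files=["index.qmd"],
    doi="10.25592/uhhfdm.13467",
)
print(fair_gaps(slides))  # prints []
print(json.dumps(asdict(slides), indent=2))  # metadata to share alongside the materials
```

A check like this could run in CI whenever the materials change, so missing metadata is caught before a release.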

Digital Literacy: A course on “Version Control of Code and Data”

Summary: A hands-on seminar on version control of code and data using Git, with curated online materials, interactive discussions, quizzes and exercises, aimed at (aspiring) researchers in Psychology & Neuroscience

Why we need version control …

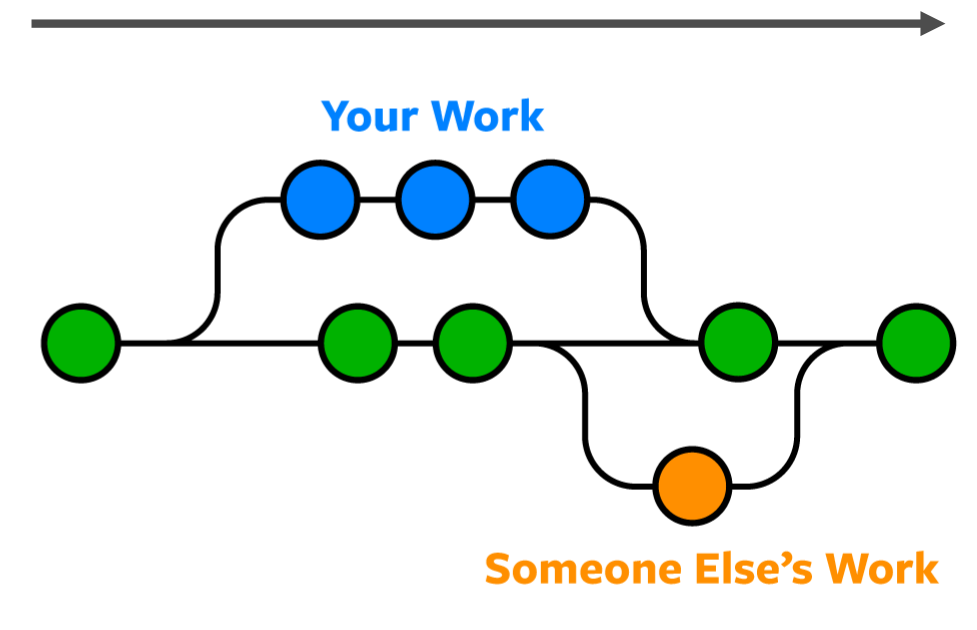

What is version control?

“Version control is a systematic approach to record changes in a set of files, over time. This allows you and your collaborators to track the history, see what changed, and recall specific versions.” (Turing Way)
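To make the three abilities in this definition concrete (track the history, see what changed, recall specific versions), here is a toy version history for a single text file. It is a teaching sketch, not how Git works internally. The class `TinyRepo` and its methods are hypothetical. Identifying versions by a hash of their content borrows the idea of content addressing from Git, but the hash computed here is not Git's object ID.

```python
import difflib
import hashlib


class TinyRepo:
    """Toy version history for one text file (illustration only, not Git's storage model)."""

    def __init__(self) -> None:
        self.snapshots = {}  # short content hash -> full file content
        self.log = []        # (commit message, short hash), oldest first

    def commit(self, content: str, message: str) -> str:
        """Record a new version, identified by a hash of its content."""
        digest = hashlib.sha1(content.encode("utf-8")).hexdigest()[:7]
        self.snapshots[digest] = content
        self.log.append((message, digest))
        return digest

    def checkout(self, digest: str) -> str:
        """Recall a specific earlier version."""
        return self.snapshots[digest]

    def diff(self, old: str, new: str) -> list:
        """See what changed between two recorded versions, as a unified diff."""
        return list(difflib.unified_diff(
            self.snapshots[old].splitlines(), self.snapshots[new].splitlines(),
            fromfile=old, tofile=new, lineterm=""))


repo = TinyRepo()
v1 = repo.commit("threshold = 0.05\n", "Add analysis settings")
v2 = repo.commit("threshold = 0.01\n", "Use stricter threshold")
print([message for message, _ in repo.log])  # the history
print("\n".join(repo.diff(v1, v2)))          # what changed
print(repo.checkout(v1))                     # an earlier version, recalled
```

Git adds much more (branches, merging, distributed copies), but these three operations are the core of what the definition describes.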

Science as distributed open-source knowledge development

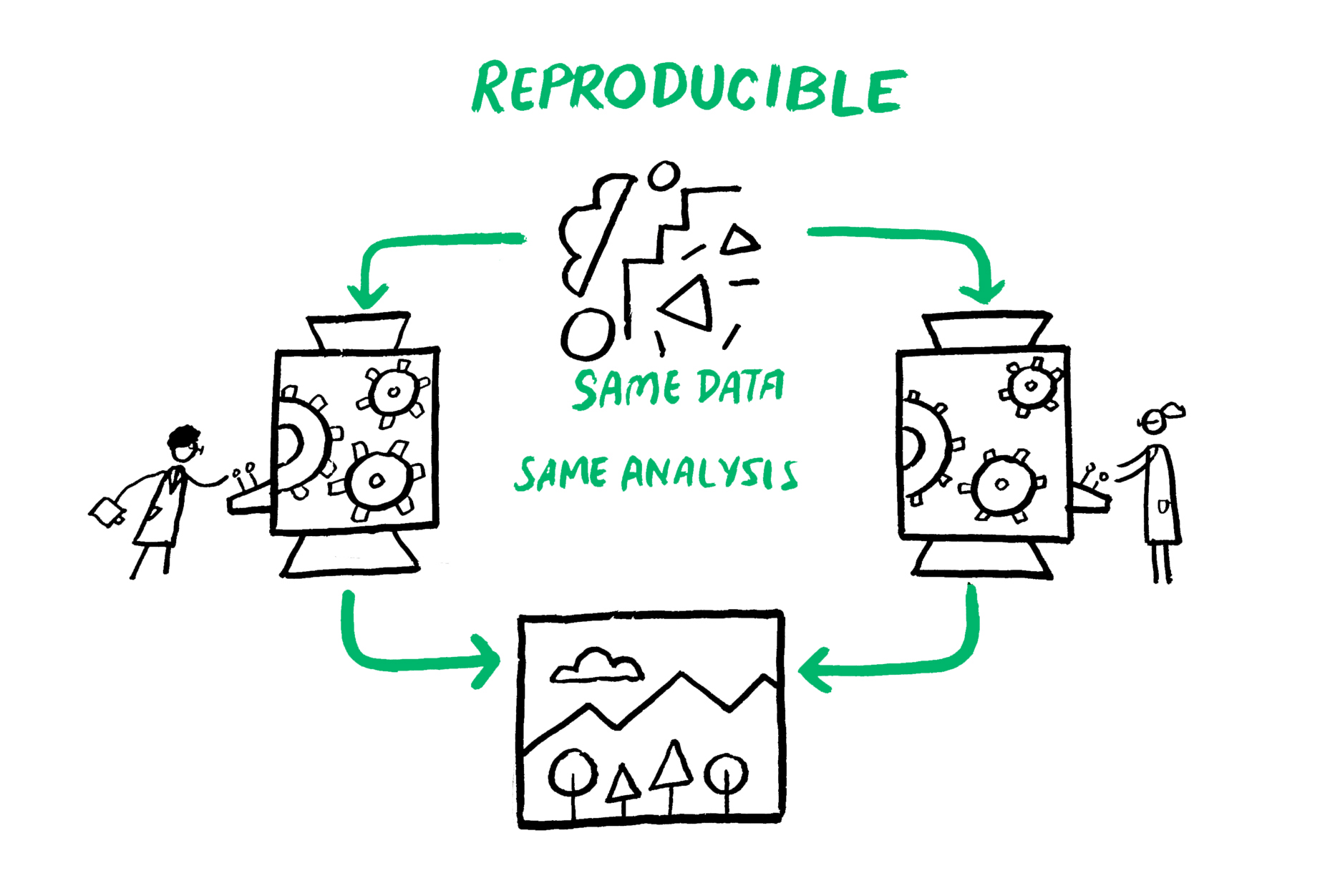

Computational Reproducibility

The problem

Why?

“… accumulated evidence indicates that there is substantial room for improvement with regard to research practices to maximize the efficiency of the research community’s use of the public’s financial investment.” (Munafò et al., 2017)

We need a professional toolkit for digital scientific outputs!

Science as distributed open-source knowledge development⁶

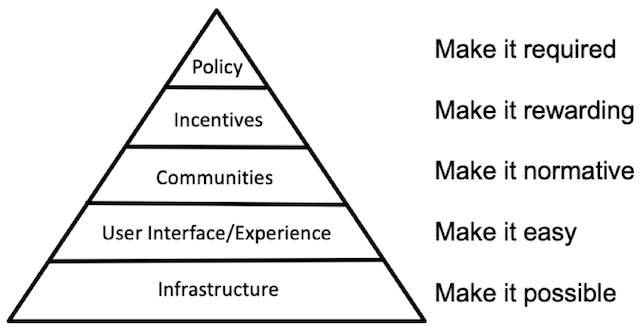

How can we do better science?

The long-term challenges are largely non-technical

- open-source, avoiding commercial vendor lock-in

- adopting new practices and upgrading workflows

- moving towards a “culture of reproducibility”⁷

- changing incentives, policies & funding schemes

Technical solutions already exist!

- Version control of digital research outputs (e.g., Git, DataLad)

- Integration with flexible infrastructure (e.g., GitLab)

- Systematic contributions & review (e.g., pull / merge requests)

- Automated integration & deployment (e.g., CI/CD)

- Reproducible computational environments (e.g., Docker)

- Transparent execution and build systems (e.g., GNU Make)

- Project communication next to code & data (e.g., Issues)
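As an example of what a transparent build system does, the sketch below mimics GNU Make's core rule: a target is rebuilt only if it is missing or older than one of its dependencies, and dependencies are brought up to date first. It is a simplified illustration, not Make itself. `Rule`, `build`, the file names and the dictionary of simulated modification times are all hypothetical; real Make reads file timestamps from disk.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    """A Make-style rule: a target, the files it depends on, and how to build it."""
    target: str
    deps: List[str]
    recipe: Callable[[], None]


def build(target: str, rules: Dict[str, Rule], mtimes: Dict[str, int], log: List[str]) -> None:
    """Rebuild `target` only if it is missing or older than any dependency."""
    rule = rules.get(target)
    if rule is None:
        return  # a source file: nothing to build
    for dep in rule.deps:
        build(dep, rules, mtimes, log)  # bring dependencies up to date first
    missing = target not in mtimes
    stale = any(mtimes.get(dep, 0) > mtimes.get(target, -1) for dep in rule.deps)
    if missing or stale:
        rule.recipe()
        mtimes[target] = max(mtimes.values(), default=0) + 1  # simulated "now"
        log.append(target)


rules = {
    "clean.csv": Rule("clean.csv", ["raw.csv"], lambda: print("cleaning raw data")),
    "figure.png": Rule("figure.png", ["clean.csv"], lambda: print("plotting figure")),
}
mtimes = {"raw.csv": 1, "clean.csv": 2, "figure.png": 3}  # simulated modification times
log: List[str] = []

build("figure.png", rules, mtimes, log)  # everything is up to date: nothing runs
mtimes["raw.csv"] = 10                   # the raw data changed
build("figure.png", rules, mtimes, log)  # reruns cleaning, then plotting
print(log)  # prints ['clean.csv', 'figure.png']
```

Writing the dependencies down explicitly is what makes the workflow transparent: anyone can see which outputs derive from which inputs, and only the affected steps are rerun.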

Summary

Digital Research

- Castellum: We are setting up a digital, privacy-compliant participant management system

- MRI Total: We are working on an automated and reproducible pipeline for MRI data processing & quality control

Digital Teaching

- FAIR & Reproducible Teaching: We are developing workflows for FAIR, reproducible & open educational resources

- Digital & Data Literacy: We are teaching version control to the next generation of researchers

Science as distributed open-source knowledge development

- We need a professional toolkit for digital research outputs, inspired by open-source software development

References

Thank you!


Dr. Lennart Wittkuhn

lennart.wittkuhn@uni-hamburg.de

https://lennartwittkuhn.com/

GitHub Mastodon Twitter

💻 Slides: https://lennartwittkuhn.com/digital-total

Source: https://github.com/lnnrtwttkhn/digital-total

📦 Software: Reproducible slides built with Quarto and deployed to GitHub Pages using GitHub Actions (details in the Quarto docs)

🖲️ DOI: 10.25592/uhhfdm.13467

License: Creative Commons Attribution 4.0 International (CC BY 4.0)

Feedback: In person at this event, via email or GitHub Issues

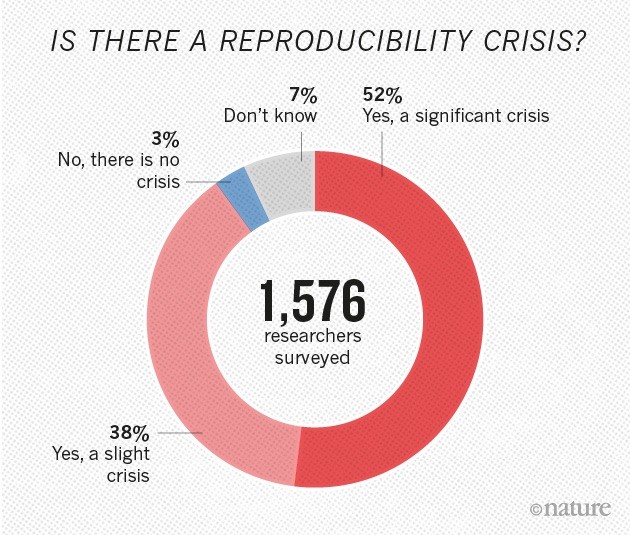

Reproducibility Crisis

Survey of N = 1,576 researchers; Baker (2016), Nature


Footnotes

1. The Turing Way Community (2022), see “Guide on Reproducible Research”

2. For example, in Psychology: Crüwell et al. (2023); Hardwicke et al. (2021); Obels et al. (2020); Wicherts et al. (2006)

3. See Baker (2016), Nature

4. See, e.g., Poldrack (2019)

5. See Smaldino & McElreath (2016)

6. Inspired by Richard McElreath’s “Science as Amateur Software Development” (2023)

7. See “Towards a culture of computational reproducibility” by Russ Poldrack, Stanford University